by M. J. SALVATI

Measuring the performance of state-of-the-art equipment, fully and with commensurate accuracy, normally requires about $20,000 worth of lab-grade test equipment. Fortunately, you can make a number of performance checks on amplifiers and preamps with just a few pieces of low-cost test equipment.

The key to this apparent minor miracle is the phrase "performance check." The procedures described here, adapted from IHF test procedures, will not always be sufficiently accurate to qualify as valid specification measurements, but they will be accurate enough to tell you if something is wrong and, in many cases, will come close to lab-grade accuracy. By not reaching for maximum accuracy in every measurement or verifying every spec, you can make most of these measurements with just a decent a.c. voltmeter, an audio generator, load resistors, and a few homemade accessories. (Some measurements described in Part II of this article will call for an oscilloscope, but there is a way around that, as you will see.)

Frequency Response

Frequency response is actually the amplitude response of a circuit or device with regard to frequency. In most cases we want a flat response (i.e., no amplitude variation) over the audio-frequency range. In some cases (noise filters and loudness controls, for example), we do want a certain type of amplitude variation to achieve a particular improvement in perceived sound. Frequency response as measured is the ratio of output amplitude over a range of frequencies to output amplitude at a reference frequency (generally 1 kHz). This ratio is usually expressed in dB, and specified as the worst-case variation(s) over a certain frequency range, e.g., "10 Hz to 50 kHz, ±0.5 dB." Though the dB variation is sometimes omitted, it will not be in a truly accurate spec.
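As a rough illustration of the ratio described above, the short Python sketch below converts a set of output-voltage readings into dB relative to the 1-kHz reading and picks out the worst-case variations. The readings are made-up values for illustration only, and the function name `db_relative` is hypothetical.

```python
import math

def db_relative(v_out, v_ref):
    """Level of v_out relative to v_ref, in dB (voltage ratio)."""
    return 20.0 * math.log10(v_out / v_ref)

# Hypothetical output readings in volts, keyed by frequency in Hz.
readings = {20: 0.74, 100: 0.77, 1000: 0.775, 10000: 0.77, 20000: 0.75}
v_ref = readings[1000]  # the 1-kHz reading is the reference

response_db = {f: db_relative(v, v_ref) for f, v in readings.items()}
worst_plus = max(response_db.values())   # largest rise above the reference
worst_minus = min(response_db.values())  # deepest dip below the reference

for freq in sorted(response_db):
    print(f"{freq:>6} Hz: {response_db[freq]:+.2f} dB")
print(f"Worst case: {worst_plus:+.2f} / {worst_minus:+.2f} dB")
```

The two worst-case figures are what a spec such as "20 Hz to 20 kHz, ±0.5 dB" summarizes.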

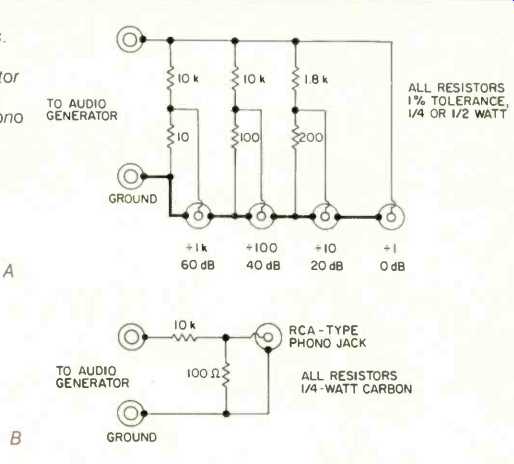

Fig. 1--Decade attenuator circuits. Multi-output precision attenuator (A) and simple attenuator for phono inputs (B).

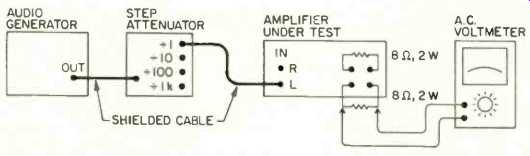

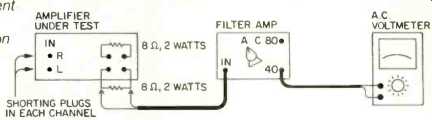

Fig. 2--Basic equipment setup for performance measurements. Configuration shown is for measuring amplifier or receiver power-output stages; for preamplifier measurements, substitute a 10-kilohm, 1/4-watt resistor paralleled by a 1,000-pF capacitor for each of the 8-ohm, 2-watt resistances shown.

Equipment Needed. Basically, a frequency-response measurement is made by applying a constant-amplitude input signal to an amplifier or other device, and measuring the output voltage as the input signal's frequency is varied. The equipment needed is simple: Just a signal source and a.c. voltmeter covering the appropriate frequency range, and load resistors. The minimum frequency range over which the signal source and voltmeter must have a perfectly flat response is 20 Hz to 20 kHz, although 10 Hz to 100 kHz is needed in many cases. Fortunately, there are many low-cost signal sources available, called function generators, which have extremely flat output (less than 0.3-dB variation) and sufficiently low sine-wave distortion (less than 1%) over the 10 Hz to 100 kHz frequency range. The B & K 3010 and 3015, Global Specialties 2001, OK Industries FG-201, and Exact 119 are a few examples. Of course, if you have an audio oscillator of lab-grade standard (such as the Krohn-Hite 4200), you need not resort to a function generator for flat output.

The one "problem" with simple function generators is that they generally do not have step attenuators in their output circuits. This makes it nearly impossible to set the 5-mV reference input level for the phono input, and the even lower levels needed for some of the following measurement procedures. Fortunately, there is a way around this, too: an attenuator like those shown in Fig. 1. You can use the 0-dB output for AUX, tuner, or tape inputs, the -40 dB output for moving-magnet phono, mike, or tape head inputs, and the -60 dB output for moving-coil phono inputs.

An a.c. voltmeter's flatness is generally included in its accuracy spec. Look for no worse than 2% to 3% accuracy over the 10 Hz to 100 kHz frequency range. If you must use a digital voltmeter (DVM), be certain to check its frequency-response spec in the instruction manual. Many DVMs have poor frequency response above 10 kHz, and some low-cost instruments are not accurate or even usable beyond 1 kHz! For this reason, and also because few DVMs have the much needed dB indication, an old-fashioned analog voltmeter, specifically designed to measure audio voltage, is generally used for frequency-response measurements. Sensitivity is of little importance, since frequency-response measurements are generally made at output levels of 0.5 to 3 V.

If even the modest equipment requirements outlined thus far are beyond your reach, don't despair. There is an alternate measurement technique, described after the basic procedures, which allows accurate frequency-response measurements with really bad test equipment--albeit at the cost of extra time and effort.

The load resistors needed are a pair of 16-ohm, 5%, 1-watt carbon resistors connected in parallel for each power amplifier output, and a 10-kilohm, 5%, 1/4-watt carbon resistor paralleled by a 1,000-pF capacitor for preamp outputs.
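A quick check of the load arithmetic may be reassuring. The sketch below (function names are hypothetical) confirms that two 16-ohm resistors in parallel make an 8-ohm load, and estimates how much power each resistor dissipates at the 2.82-V level used later for 1-watt reference output. It assumes a purely resistive load and r.m.s. voltages.

```python
def parallel_resistance(*resistors_ohms):
    """Equivalent resistance of resistors connected in parallel."""
    return 1.0 / sum(1.0 / r for r in resistors_ohms)

def dissipation_watts(volts_rms, load_ohms):
    """Power dissipated in a resistive load at a given r.m.s. voltage."""
    return volts_rms ** 2 / load_ohms

load = parallel_resistance(16.0, 16.0)   # 8.0 ohms
total = dissipation_watts(2.82, load)    # just under 1 watt in the pair
per_resistor = total / 2.0               # equal values share the power equally

print(f"Load: {load:.1f} ohms, total {total:.2f} W, {per_resistor:.2f} W per resistor")
```

Each 1-watt resistor carries only about half a watt at reference output, which is why small carbon resistors suffice for these low-level checks.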

Basic Measurement Procedure. If your signal generator and voltmeter both have sufficiently flat frequency response, measure frequency response as follows:

1. Turn on all equipment (Fig. 2), and allow an appropriate warmup time, about 5 minutes for semiconductor equipment and 15 minutes for vacuum-tube equipment.

2. Set any filters, equalizers, and tone, boost, or loudness controls to their flat-response positions, unless you specifically want to measure the effect of one such control on frequency response. Set the amplifier's volume or gain control to minimum, and its balance control for equal output.

3. Connect the appropriate attenuator output (if used) to the input jack of the amplifier being measured. For devices with multiple inputs (such as preamps and receivers), connect to a general-purpose high-level input (AUX, for example), unless you wish to make a specialized frequency plot. Do not use the phono inputs for a general frequency-response measurement. Set the amplifier's mode or function switch to match the input used.

4. Connect the appropriate load(s) to the amplifier's output terminal(s). Connect the a.c. voltmeter across the load resistor of the channel under test.

5. Set the a.c. voltmeter range switch to accommodate the reference output level. The IHF mandates 0.5 V for preamps, and the voltage corresponding to 1-watt output for power amplifiers (2.82 V at 8 ohms, 2.0 V at 4 ohms). However, you can get the same results and save yourself a lot of conversion calculations by using voltage values which correspond to your meter's 0-dB mark. On most a.c. voltmeters, these correspond to 0.775 V (on the meter's 0-dB range), suitable for preamps, and 2.45 V (+10 dB range), suitable for power amps. If you have one of the few a.c. voltmeters using the 0 dB = 1.0 V system, use 0.316 V (-10 dB range) for preamps, and 3.16 V (+10 dB range) for power amplifiers and receivers.

6. If measuring anything other than a separate power amplifier, set the generator frequency to 1 kHz and adjust its output level to 0.5 V. Turn up the amplifier's gain or volume control until the voltmeter pointer is on the 0-dB meter-scale calibration. Thereafter, do not touch the amplifier's gain or volume controls. If measuring a separate power amplifier, set its level control (if any) to maximum, and use the audio generator's output control to set the 0-dB meter-scale indication at 1 kHz.

7. Set the generator to other frequencies in turn, and record the voltmeter indication in terms of plus and minus variations from 0 dB for each frequency. Make extra measurements around frequencies where the output voltage undergoes rapid change so the "knees" in the response curve can be accurately drawn.

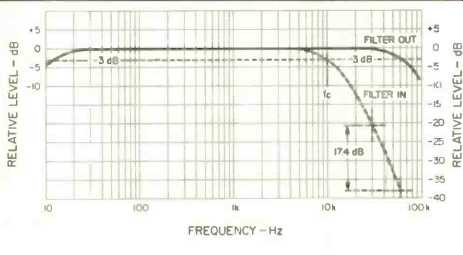

8. Plot the meter readings obtained in Step 7 on semilog graph paper, as shown in Fig. 3. Good-quality audio equipment will produce a flat response that rolls off smoothly at each frequency extreme.
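The reference voltages quoted in Step 5 can be reproduced with a few lines of Python. This is only a sketch; the function names are hypothetical, and it assumes a resistive load and r.m.s. voltages.

```python
import math

def voltage_for_power(power_watts, load_ohms):
    """R.m.s. voltage that delivers power_watts into a resistive load."""
    return math.sqrt(power_watts * load_ohms)

def zero_db_mark_voltage(meter_zero_db_volts, range_db):
    """Voltage at the meter's 0-dB scale mark on a given dB range."""
    return meter_zero_db_volts * 10.0 ** (range_db / 20.0)

print(f"1 W into 8 ohms: {voltage_for_power(1.0, 8.0):.2f} V")
print(f"1 W into 4 ohms: {voltage_for_power(1.0, 4.0):.2f} V")
print(f"0.775-V meter, +10 dB range: {zero_db_mark_voltage(0.775, 10.0):.2f} V")
print(f"1.0-V meter, -10 dB range: {zero_db_mark_voltage(1.0, -10.0):.3f} V")
print(f"1.0-V meter, +10 dB range: {zero_db_mark_voltage(1.0, 10.0):.2f} V")
```

The 8-ohm figure computes to about 2.83 V; the 2.82 V used in the text is the customary rounded value.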

Using Non-Flat Test Equipment. If your audio generator and/or a.c. voltmeter have such poor output flatness and frequency response that they make the plotted response curve look like a design for a roller coaster, redo the measurement with the following modification. Each time you change frequency in Step 7, disconnect the voltmeter from the amplifier output, and re-measure the generator output voltage. Adjust the generator output level control for the output level selected in Step 6, then reconnect the voltmeter to the amplifier output and record the output level. This technique, though time-consuming, cancels out flatness problems in the test equipment. Note, however, that you must use the same voltmeter to measure the amplifier's input voltage that you use to measure its output voltage.

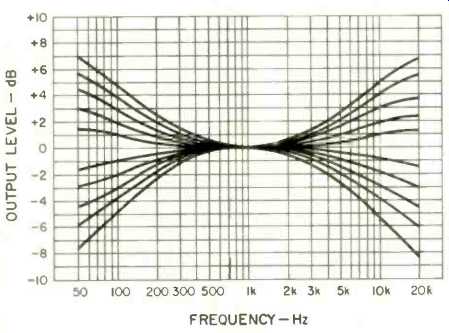

Fig. 3--Frequency response plot for a typical amplifier. Note the 3-dB down points and the filter slope (dashed curve).

Fig. 4--Typical tone control frequency response plots.

Filters, Equalizers and Tone Controls. When filters and graphic equalizers are inserted, they alter a relatively limited portion of an amplifier's frequency response. The basic measurement techniques apply; the only special consideration is to make more closely spaced measurements in the frequency range where the control has its effect. This will better define the knee or peak (as the case may be) in the response curve.

The rated cutoff or corner frequency of a filter is that frequency at which output amplitude drops 3 dB below its 1-kHz value. For example, the corner frequency of the typical high-cut filter plotted (dashed curve) in Fig. 3 is 10 kHz. To determine, with reasonable accuracy, the roll-off or attenuation rate of a filter, you must make measurements at frequencies where the output is 30 to 40 dB down. In our Fig. 3 example, the response is down 20.6 dB at 30 kHz, and is 38 dB down at twice that frequency (one octave higher). The measured difference of 17.4 dB is close to the 18 dB/octave theoretical attenuation rate of a three-pole filter.
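The roll-off arithmetic generalizes to any two measurement frequencies, not just a pair exactly one octave apart. The sketch below is a minimal illustration using the Fig. 3 figures quoted above. The function names are hypothetical, and the pole estimate assumes the ideal 6 dB/octave per pole, which only holds well beyond the corner frequency.

```python
import math

def rolloff_db_per_octave(f1_hz, level1_db, f2_hz, level2_db):
    """Slope between two response points, in dB per octave."""
    octaves = math.log2(f2_hz / f1_hz)
    return (level2_db - level1_db) / octaves

def estimated_poles(slope_db_per_octave):
    """Nearest whole number of poles, at roughly 6.02 dB/octave per pole."""
    return round(abs(slope_db_per_octave) / 6.02)

slope = rolloff_db_per_octave(30e3, -20.6, 60e3, -38.0)
print(f"Slope: {slope:.1f} dB/octave, about {estimated_poles(slope)} poles")
```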

Conventional tone controls, be they stepped or continuous, affect a relatively large portion of an amplifier's response curve. To fully characterize their action, make frequency-response measurements over the range of 50 Hz to 20 kHz (according to the Basic Measurement Procedure) at each step or panel marking. (If you wish, you can go beyond this frequency range, but then the results may be affected by the amplifier's low- and high-end roll-off.) Plot the results on semilog graph paper, as in Fig. 4.

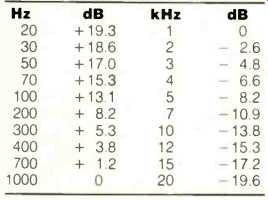

Phono Inputs. Measured directly, the frequency response of an amplifier's phono input will look like a severely tilted curve. This is due to the high-frequency attenuation and low-frequency boost characteristics of the RIAA equalization in the phono circuitry. You should therefore plot the difference between the measured response and the playback equalization values given in Table I. (Note that the values in this table must be algebraically subtracted to get the proper sign for the remainder.) For example, suppose you measure -8 dB at 5 kHz. Since the 5 kHz response is supposed to be -8.2 dB, the actual error is only +0.2 dB.
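If the table is not at hand, the playback equalization can be computed instead. The sketch below assumes the standard RIAA playback time constants of 3180, 318, and 75 microseconds; those constants, and all the function names, are assumptions brought in for illustration and are not taken from the article's table.

```python
import math

# Standard RIAA playback time constants in seconds (an assumption here;
# they are not quoted in the article itself).
T1, T2, T3 = 3180e-6, 318e-6, 75e-6

def _riaa_gain_db(freq_hz):
    """Unnormalized RIAA playback gain in dB."""
    w = 2.0 * math.pi * freq_hz
    num = 1.0 + (w * T2) ** 2
    den = (1.0 + (w * T1) ** 2) * (1.0 + (w * T3) ** 2)
    return 10.0 * math.log10(num / den)

def riaa_playback_db(freq_hz):
    """Ideal playback equalization relative to the 1-kHz level."""
    return _riaa_gain_db(freq_hz) - _riaa_gain_db(1000.0)

def riaa_error_db(freq_hz, measured_db):
    """Measured response minus ideal equalization (algebraic subtraction)."""
    return measured_db - riaa_playback_db(freq_hz)

print(f"Ideal at 5 kHz: {riaa_playback_db(5000.0):+.1f} dB")
print(f"Error for a -8.0 dB reading: {riaa_error_db(5000.0, -8.0):+.1f} dB")
```

For the 5-kHz example in the text, this reproduces the -8.2 dB ideal value and the +0.2 dB error.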

Signal-to-Noise Ratio

Ideally, an amplifier should have no output signal whatsoever when there is no input applied. In reality, noise of some sort (hum, thermal, popcorn, etc.) appears in the output of every amplifier. Signal-to-noise ratio is the strength of the hum and noise appearing in the amplifier's output signal relative to the amplifier's rated output level.

This ratio is normally expressed in dB, and constitutes the S/N ratio spec of most older amplifiers. The S/N ratio of newer amplifiers is the ratio of hum and noise to a standard reference output level, with standardized gain in most cases. Since most amplifiers are rated for output levels higher than the IHF reference levels, the new method results in lower numbers, particularly for high-power amplifiers.

Fig. 5--Simple filter/amplifier (A), with A- and C-weighting networks, for use with a.c. voltmeters having sensitivities of at least -60 dB (1 mV, full scale). For less sensitive meters, add the extra gain stage (B).

However, another difference between old and new standards is weighting (i.e., filtering that restricts the bandwidth of the noise being measured). Most old-standard measurements were made with B- or C-weighted filters, which pass a wider noise bandwidth than the A filter mandated by the current IHF spec. Because the A filter measures less of the noise present in an amplifier's output, the S/N ratio it yields is higher for any equivalent noise and reference levels. Because of these differences, there are few valid comparisons possible between S/N ratios made under the old and new measurement procedures.

Equipment Needed. Basically, S/N measurements are made by shorting an amplifier's input terminals and measuring the noise voltage present at the amplifier's output terminals. However, the noise output of modern audio equipment is so low that only a few, expensive a.c. voltmeters can give a readable indication. Therefore, it is customary to amplify the noise with an amplifier whose own noise level is very low. The circuit shown in Fig. 5A is a low-cost, low-noise amplifier having 40-dB voltage gain (100X), and containing the filters for A and C weighting. All capacitor values are in microfarads, and the resistors are 1/4-watt carbon film. The preferred tolerances are specified on the schematic, but ordinary 5%-tolerance resistors and 10% capacitors will provide enough accuracy for practical purposes. The low-cost XR4739 is available from several mail-order houses, but a Precision Monolithics OP-227 (different pin connections) will provide even lower noise performance.

When preceded by the preamplification described, nearly any a.c. voltmeter having a dB scale and range markings can be used. The most sensitive range should be -60 dB (1 mV, full scale) if you expect to work on high-quality audio equipment, where the S/N ratios may exceed 110 dB. If you have a low-cost a.c. voltmeter such as the old but popular Heathkit AV-3 (-40 dB, 10 mV, full scale), you might need amplification in addition to that of the basic filter/amp. Another XR4739 (Fig. 5B) can be added to pin 7 of the first XR4739. This will provide an 80-dB gain output in addition to the 40-dB gain output of the basic filter/amp. Furthermore, if the voltmeter's dB scale has its zero referenced to 0.775 V (1 mW into 600 ohms), no dB conversions or difficult calculations will be needed in the following procedures.

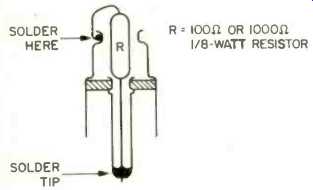

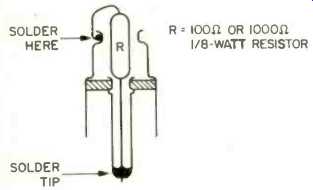

Fig. 6--Input-terminating plug for S/N measurements.

The IHF recommends input terminations via 1-kilohm resistors for most inputs (AUX, tuner, tape, line, moving magnet phono, etc.) and 100 ohms for moving-coil phono inputs. For our purposes you can use the shorting plugs (usually) supplied with your audio equipment for most measurements.

However, input termination is important with some ultra-low-level moving-coil preamps, so you should build a couple of 100-ohm shorting plugs as shown in Fig. 6. Use as small a carbon or metal film resistor as you can obtain; do not use carbon-composition resistors. Keep the leads extremely short on these plugs.

The load resistors needed are a pair of 16-ohm, 5%, 1-watt carbon resistors connected in parallel for each power amplifier output. The input impedance of the filter/amp will provide the proper load for preamp outputs.

Receiver, preamp, and integrated amplifier S/N ratio measurements made to the current IHF procedure also require an audio generator supplying 0.5 to 3 V at 1 kHz, and a decade attenuator (Fig. 1).

Basic Measurement Procedure. To measure S/N ratio relative to rated output (or to reference output, for discrete power amplifiers), proceed as follows:

1. Turn on all equipment (Fig. 7) and allow an appropriate warmup time (about 5 minutes for semiconductor equipment and 15 minutes for vacuum-tube equipment).

2. Insert shorting plugs or terminations into the input connectors of the desired amplifier function (e.g., tuner).

3. Set any filters, equalizers, and tone, boost or loudness controls on the amplifier to their flat-response position. Set the function or mode selector of the amplifier under test to match the input terminated in Step 2. Set its volume or gain control at maximum, and its balance control for equal output.

4. Connect the filter/amp 40-dB out connector to the input connector of your a.c. voltmeter, and select the weighting network (A or C) called for by the specification you are checking.

5. If the unit under test is a preamp, connect its output jack (for the channel being measured) directly to the input connector of the filter/amp. If the unit under test is a power amplifier or receiver, connect 8-ohm load resistors across each channel's speaker terminals, then connect the filter/amp input across the load resistor of the channel being measured.

6. Set the voltmeter's range selector for maximum on-scale reading. The measured S/N ratio is the sum of four dB figures: The meter scale, meter range, filter/amp gain, and the output adjustment factor. For our calculations, use the plus or minus signs of the meter scale and range switch as marked.

Consider the filter/amp gain as a negative quantity, output levels above 0.775 V as negative, and output levels below 0.775 V as positive. For example, if we were measuring the tuner input of a preamp with 0.5-V rated output, the meter scale might indicate -5.5 dB when the voltmeter range switch is set to its -50 dB position. Since the output level of 0.5 V is below 0.775 V (0 dBm), we add the 3.8-dB adjustment factor (Table I) as a positive quantity to get S/N ratio at rated output:

(-5.5) + (-50) + (-40) + (+3.8) = -91.7 dB.

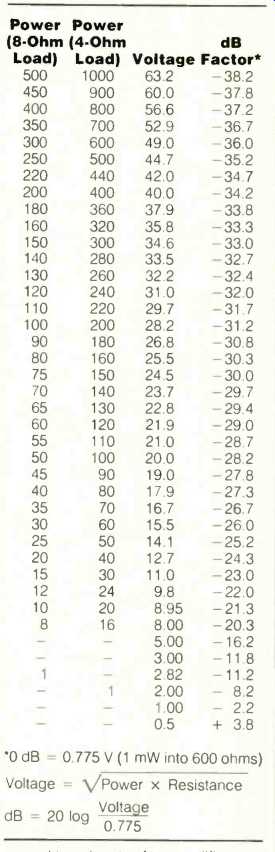

If a power amplifier were being measured, the adjustment factor (for reference or rated output) would certainly be a negative quantity. Adjustment factors for the standard 1-watt reference output and for various rated power output levels are given in Table II.

7. Switch the input of the filter/amp to the other channel's output, and repeat Step 6.

8. Repeat Steps 2, 3, 6, and 7 for every other pair of inputs on the amplifier. Note, however, that the AUX, tuner, and tape inputs of an amplifier generally use the same circuitry and will produce identical results. Inputs with different circuitry include high-level phono, low-level phono, mike, and tape head.
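The four-figure sum in Step 6 is easy to get wrong by a sign, so a small helper may be worth keeping at the bench. The sketch below is one way to write it; the function name and argument order are hypothetical. The filter/amp gain is entered as a positive number and subtracted inside the function, which matches the "consider the gain as a negative quantity" rule.

```python
def sn_ratio_db(meter_scale_db, meter_range_db, filter_amp_gain_db, adjustment_db):
    """Sum the four dB figures from Step 6.

    meter_scale_db and meter_range_db carry their signs as marked on the meter.
    filter_amp_gain_db is the gain as a positive number (40 or 80); it is
    subtracted here because the amplified noise must be referred back to the
    amplifier output.
    adjustment_db is positive for outputs below 0.775 V, negative above.
    """
    return meter_scale_db + meter_range_db - filter_amp_gain_db + adjustment_db

# The preamp example from Step 6: -5.5 dB on the scale, -50 dB range,
# 40-dB filter/amp gain, +3.8 dB adjustment for 0.5-V rated output.
result = sn_ratio_db(-5.5, -50.0, 40.0, 3.8)
print(f"S/N ratio: {result:.1f} dB")
```

The result comes out negative because it expresses noise relative to signal; specs usually quote the same figure without the sign.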

Table I--Phono correction factors.

S/N Ratio Relative to Reference Output. This is similar to the current IHF procedure for receivers, integrated amplifiers, preamplifiers, and any type of amplifier other than discrete power amplifiers. It is much more complicated than the Basic Measurement Procedure, because the amplifier's gain must be standardized before the actual S/N measurement can be made. To make this measurement, proceed as follows:

1. Set up the equipment as shown in Fig. 2, selecting the attenuator output jack appropriate for the input being measured (0 dB for AUX, tuner or tape, -40 dB for MM phono and mike, -60 dB for MC phono).

2. Turn on all equipment and allow an appropriate warmup time, about 5 minutes for semiconductor equipment and 15 minutes for vacuum-tube equipment.

3. Set any filters, equalizers, and tone, boost or loudness controls on the amplifier to their flat-response position. Set the amplifier's volume or gain control at minimum and its function or mode selector to match the input being tested. Set its balance control for equal output.

4. Set the audio generator frequency at 1 kHz and its output level at 0.5 V.

5. Turn up the amplifier's volume or gain control until the a.c. voltmeter indicates reference output (0.5 V for preamps, 2.82 V for receivers and integrated amplifiers). After this point, do not touch the amplifier's volume or gain control.

6. Disconnect the attenuator cable from the amplifier input, and insert a shorting plug or terminating resistor into the same input connector.

7. Install the filter/amp between the a.c. voltmeter and output of the amplifier under test (see Fig. 7). Select the A weighting network.

8. Set the voltmeter range switch for maximum on-scale indication. The measured S/N ratio, relative to reference output, is the sum of four dB figures: The meter scale, meter range, filter/amp gain, and output adjustment factor. For our calculations, use the plus or minus signs of the meter scale and range switch as marked. Consider the filter/amp gain as a negative quantity. The preamp adjustment factor is +3.8 dB; the power amplifier and receiver adjustment factor is -11.2 dB for 8-ohm loads.

9. Switch the input of the filter/amp to the other channel's output, and repeat Step 8.

10. Repeat Steps 1 to 9 for every other pair of inputs you wish to measure. Note, however, that the AUX, tuner, and tape inputs of an amplifier generally use the same circuitry and will produce identical results. Inputs with different circuitry include high-level phono, low-level phono, mike, and tape head.
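The output adjustment factors quoted in Step 8 follow directly from the ratio of the meter's 0-dB voltage to the output level in question. The sketch below shows the calculation, assuming a meter referenced to 0.775 V and a resistive load; the function names are hypothetical.

```python
import math

def adjustment_factor_db(output_volts, meter_zero_db_volts=0.775):
    """Adjustment factor in dB: positive below the meter's 0-dB voltage,
    negative above it."""
    return 20.0 * math.log10(meter_zero_db_volts / output_volts)

def adjustment_for_power_db(power_watts, load_ohms, meter_zero_db_volts=0.775):
    """Same factor, starting from a power level into a resistive load."""
    volts = math.sqrt(power_watts * load_ohms)
    return adjustment_factor_db(volts, meter_zero_db_volts)

print(f"Preamp, 0.5 V: {adjustment_factor_db(0.5):+.1f} dB")
print(f"1 W into 8 ohms: {adjustment_for_power_db(1.0, 8.0):+.1f} dB")
print(f"100 W into 8 ohms: {adjustment_for_power_db(100.0, 8.0):+.1f} dB")
```

The first two lines reproduce the +3.8 dB and -11.2 dB figures given in Step 8; the third shows how much larger the factor becomes at a rated output of 100 watts.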

Table II--Power, voltage, and dB conversions.

Fig. 7--Equipment setup for S/N measurements on power-output stages. For preamp measurements, change output loads as in Fig. 2.

Sensitivity

This specification, too, has undergone a significant change in recent years. In the old days, sensitivity was defined as the minimum input voltage needed to develop rated amplifier output (either voltage or power) when applied to a certain input connector. The current IHF standard defines sensitivity as the minimum input voltage needed to produce the appropriate reference output level. There isn't much difference between the two standards for preamps, since the typical rated output voltage for preamps was only about twice the current 0.5-V reference level. However, most power amplifiers and receivers have rated outputs in the 30 to 200 watt range, many times the 1-watt reference output level now used for power amps. The result is that their sensitivity figures now look much better (lower), especially in the case of high-power amplifiers and receivers.

Equipment Needed. Sensitivity measurements require a sine-wave signal source (audio oscillator or function generator) at 1 kHz with output amplitude controllable over a range from 0.1 mV to 1 V, and an a.c. voltmeter capable of measuring those levels at 1 kHz.

Though low-cost function generators do not have output-level controls capable of easy operation below a few hundred millivolts, and low-cost a.c. voltmeters cannot measure levels below a few millivolts with any degree of accuracy, very low-level sensitivity measurements are still possible. By inserting an attenuator of known division ratio between the generator and amplifier, you can set the generator's output at easily controlled levels 10 to 1,000 times higher than the levels actually fed to the amplifier. The attenuator shown in Fig. 1A provides up to 3 decades of precision attenuation, with outputs ranging from 0.1 mV to 2 V, all from an input voltage that need be controlled at the generator only over a range from 100 mV to 2 V. Of course, if your generator does have a wide-range output attenuator, you might need only a single 40-dB attenuator (Fig. 1B) for very low-level phono inputs. In general, though, you might use the 0-dB output for low-power amplifiers and receivers, the -20 dB output for high-power amplifiers and the AUX, tuner, and tape inputs of preamps, the -40 dB output for low-level inputs (phono, mike, tape head), and the -60 dB output for very low-level moving-coil phono inputs.

Load resistors for reference-output sensitivity measurements are the same as used for frequency-response measurements because of the low power levels involved. However, if you are going to measure power amplifier or receiver sensitivity at rated power output, the load resistors must be capable of dissipating possibly huge amounts of power. This means large wirewound resistors mounted on large sheet-aluminum heat-sinks. You will need at least one 8-ohm, 5% tolerance resistor. If any of your power amplifiers or receivers is specified at 4 ohms, obtain two 8-ohm resistors. These can be connected in parallel to make a 4-ohm resistor, or used separately for other measurements where both channels must be driven.

Measurement Procedure. To measure the sensitivity in a particular input mode, proceed as follows:

1. Turn on all equipment (Fig. 2) and allow an appropriate warmup time, about 5 minutes for semiconductor equipment and 15 minutes for vacuum-tube equipment.

2. Set any filters, equalizers, and tone, boost or loudness controls on the amplifier to their flat-response position. Set the amplifier's volume or gain control at maximum, and its balance control for equal output in each channel.

3. Set the audio-generator output level control for minimum output.

4. Connect the generator output to the attenuator input, and the appropriate attenuator output jack to the desired input jack of the amplifier being measured. Set the amplifier's function or mode selector to match the input being used.

5. Connect the appropriate load(s) across the amplifier output terminals. Connect the voltmeter input terminals across the load resistor of the channel being measured.

6. Set the a.c. voltmeter range selector to the position covering the appropriate reference level (0.5 V for preamps and 2.82 V for power amps and receivers). However, if you are measuring sensitivity at rated output, set the range selector to a position covering the rated output voltage (preamps) or the voltage level corresponding to rated power output (power amps and receivers). The conversion chart in Table II will be helpful in this case.

7. Set the audio generator frequency at 1 kHz, then increase its output level until the voltmeter indicates reference or rated output. (You may have to change the amount of attenuation to do this.)

8. Disconnect the a.c. voltmeter from the load resistor and measure the generator output voltage. Divide this voltage by the attenuator's division ratio to get the sensitivity figure for that input. (Now you see why we needed 1% resistors.) For example, if we measure 1.2 V and are using the 20-dB (divide-by-10) attenuator, the input sensitivity is 0.12 V (120 mV).

9. Repeat Steps 3 to 8 for the other channel.

10. Repeat Steps 3 to 9 for the other inputs.

Note, however, that the AUX, tuner, and tape inputs of a preamp or integrated amplifier generally use the same circuitry and will produce identical results. Inputs with different circuitry include high-level phono, low-level phono, mike, and tape head.
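The division in Step 8 can be sketched in a couple of lines. The function name is hypothetical, and the calculation assumes the attenuator's dB marking is exact, which is the reason for using precision resistors in it.

```python
def input_sensitivity_volts(generator_volts, attenuation_db):
    """Voltage actually reaching the amplifier input: the measured generator
    output divided by the attenuator's division ratio."""
    division_ratio = 10.0 ** (attenuation_db / 20.0)  # 20 dB -> 10, 40 dB -> 100
    return generator_volts / division_ratio

# The example from Step 8: 1.2 V at the generator, 20-dB attenuator tap.
sensitivity = input_sensitivity_volts(1.2, 20.0)
print(f"Sensitivity: {sensitivity * 1000.0:.0f} mV")
```

The same call with 40 or 60 dB covers the phono taps; for instance, 0.5 V at the generator through the 40-dB tap puts 5 mV at the amplifier input.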

In Part II, I'll describe how to measure damping factor and maximum output. I'll also show how to build a simple indicator which can substitute for an oscilloscope for certain tests.


(adapted from Audio magazine, Feb. 1984)
