By David Ranada

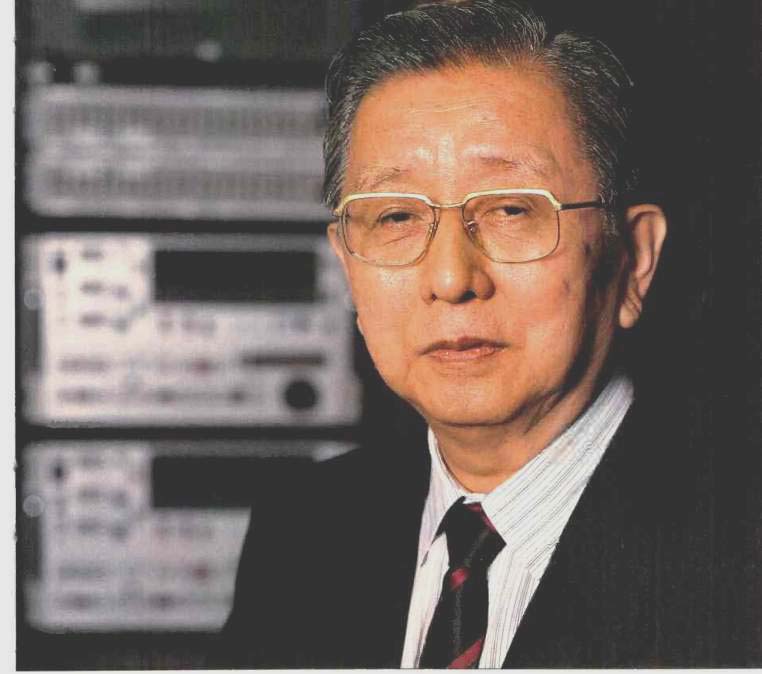

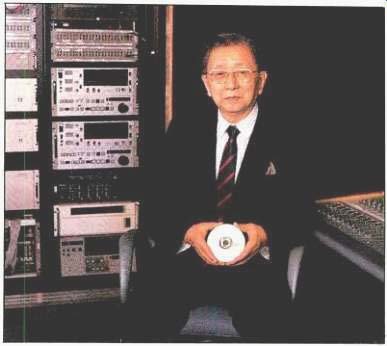

[Dr. Heitaro Nakajima is one of the founding fathers of digital audio, having been a member of the team responsible for the development--amid formidable technical and bureaucratic difficulties--of the first digital audio recorder at the Japanese Broadcasting Corp. (NHK) in 1967. That machine was a 12-bit monster with a 30-kHz sampling rate, a far cry from today's DAT recorders with their single-chip, 16-bit oversampled converters and 48-kHz basic sampling rate.

From that first recorder through Sony's work on the CD system and DAT, Nakajima has seen it all and reminisces about his long career in digital audio in this interview. D.R.]

Tell us how you started your career.

After my graduation from college in 1947, I joined NHK, the Japanese Broadcasting Corporation, where I began my initial research with microphone and loudspeaker design for broadcast use.

I take it that your background in these areas is quite scientific and technical?

That is correct. In fact, as an outgrowth of this research, NHK introduced a reference studio monitor speaker, and later this initial research also led to Sony's development of the C37 and C39 microphones.

I also understand that you were involved with the very first digital recordings done by NHK. How were these conducted?

We began our work with digital recording at NHK in 1965, just after the conclusion of the Tokyo Olympics. My group was actually responsible for developing the world's first digital tape recorder, which was introduced in Tokyo in 1967. It operated at a sampling rate of 30 kHz, with 12-bit quantization.

This seems to be a very early period in the development of digital audio technology.

In retrospect, our work in digital audio was very advanced compared to the rest of the industry. Frankly speaking, by that time our team had already completed our transducer research, so we were quite ready for a much more formidable challenge.

What motivated NHK to get involved in digital audio at that time?

You must first understand that in 1964, the Japanese broadcast industry considered the Tokyo Olympics to be a true milestone in our country's history. Therefore, much research and development work was focused on making the broadcasts of these Olympics as memorable an experience as possible.

In addition, we had recently achieved another milestone with the introduction of FM stereo broadcasting. So by this time, we had already realized that the quality of our analog master recorders was not good enough to serve as a reference source for high-quality FM transmissions. These were the factors behind NHK's early involvement in digital.

It goes without saying that moving from analog to digital recording was a fairly radical change in the way that broadcasters approach their business. In this regard, do you specifically remember any other experiments in other parts of the world that coincided with NHK's efforts?

As far as I can remember, there were no other activities in digital recording anywhere else at that time. In fact, the only other group interested in digital audio at all was the BBC, who, if I correctly recall, began experimenting with it around 1969.

Did you really expect digital audio to come so far in such a relatively short time?

Not at all. When you consider that our first digital recorder was large and cumbersome, cost at least five times more than conventional analog tape recorders, and didn't even include a provision for editing, many people were extremely curious as to why NHK was interested in digital audio at all at this time.

If this was the prevailing opinion, why indeed did NHK move in this direction? Was it the quality of digital audio sound?

In some ways, significantly so. For although much research still remained to be done, even with our first recordings I was immediately taken by the incredible improvements in dynamic range and surface noise versus analog. Without this initial impression, I'm afraid I could not have been motivated to continue my research in digital audio for another 20 years.

In 1971, approximately five years after your initial work in digital audio with NHK, you joined Sony. Is this when it first occurred to you that it would be possible to bring digital audio sound to the consumer?

Yes. By the time my first anniversary at Sony had passed, there were already 40 research and development engineers in my division. It was at that time that I decided to assign two of these engineers the task of beginning applied research into the fundamentals of digital audio. Yet I did this without establishing any ultimate purpose or specific product goal.

This was primarily based on my belief that digital technology would ultimately prove to be the foundation for some type of new recording system that was then unavailable for use with any analog product. And it is this belief that ultimately became the motivation for our original group of digital engineers.

I take it, then, that as your engineers continued to refine their work in digital audio, the impetus for new Sony product developments naturally began to follow.

Yes, that's correct. For example, we discovered initially that a conventional stationary-head recorder did not offer quite enough bandwidth to record digital information efficiently. Therefore, we decided to redesign a helical-scanning head to record the data instead.

As a result of this development, we invented dedicated pulse-code modulation [PCM] processors like the PCM-1600 and PCM-1, which were designed to be used with already existing U-matic or Betamax video recorders.

When I joined the industry in 1979, technology was also under development to bring digital audio to the consumer via optical disc. How did your work in this area begin?

Our work in this area began with our preliminary research into optical videodisc technology. This was followed by successfully recording high-density digital audio data onto a videodisc. All of this led us to conclude that virtually any optical format that could record video could be easily modified to store digital audio instead.

I remember that an early Sony prototype for an optical digital audio disc was 30 centimeters in diameter and provided many hours of digital audio music. Was it Sony's co-developer of the Compact Disc format, Philips, that actually proposed a very small digital disc?

We began our research from the traditional analog perspective of a 12-inch format that would store up to one hour of recorded music. However, as our digital research continued, we realized that we could actually achieve up to 13 hours of recording time on an optical disc of this diameter.

My conclusion was that since 13 hours of prerecorded music would not be necessary, a one-hour music playback format could be achieved on a disc only 9 centimeters in diameter. Later we learned through our discussions with Philips that their initial proposal for the Compact Disc format called for a disc 11.5 centimeters in diameter.

So Philips' first proposal for the CD standard was larger than 9 centimeters but smaller than the present CD?

That is correct. The current CD format specifies a disc that is 12 centimeters in diameter, while Philips' original proposal called for an 11.5-centimeter disc.

It is also my understanding that Philips' initial proposal provided a shorter playing time as well as a different signal resolution and error-correction system than is specified in the final CD standard. How did all of this finally evolve into the present Compact Disc system?

The key to the Philips proposal was that they based their approach primarily on their extensive experience in optical videodisc technology rather than their experience in digital audio processing. This is why they recommended a digital converter system that only provided 14-bit music resolution.

On the other hand, our Sony team approached CD from a totally different perspective and therefore based our recommendations on our developments in PCM digital audio.

If Philips recommended a 14-bit system, how did the two companies finally agree on the 16-bit CD format?

As a matter of fact, Philips insisted on 14-bit quite strongly. However, I had two responses:

First, from a technical point of view, although a 16-bit D/A converter was not available at that time except for professional use, I believed that in the future it would not be that much more difficult to produce a 16-bit LSI than a 14-bit converter. Even more important, from a musical point of view, once CD listeners would become accustomed to the limitations of 14-bit sound quality, they would demand even higher resolution than a 16-bit system. Today, high resolution still remains an issue in the music industry, with engineers now insisting on 20-bit systems for professional use.
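As a rough illustration of what is at stake between 14-bit and 16-bit resolution, the sketch below uses the standard textbook approximation for an ideal linear quantizer driven by a full-scale sine wave (about 6.02 dB per bit plus 1.76 dB). The function name is hypothetical and is not taken from the interview, and real converters fall short of these ideal figures.

```python
import math


def ideal_dynamic_range_db(bits: int) -> float:
    """Theoretical signal-to-quantization-noise ratio, in dB, of an ideal
    N-bit linear PCM quantizer with a full-scale sine-wave input.

    Assumption: textbook formula 20*log10(2**N) + 10*log10(1.5),
    i.e. roughly 6.02*N + 1.76 dB. Not a measurement of any real converter.
    """
    return 20 * math.log10(2 ** bits) + 10 * math.log10(1.5)


# Word lengths mentioned in the interview: the 1967 NHK recorder (12),
# the Philips proposal (14), the CD standard (16), professional systems (20).
for bits in (12, 14, 16, 20):
    print(f"{bits}-bit linear PCM: about {ideal_dynamic_range_db(bits):.1f} dB")
```

Under these assumptions each added bit is worth about 6 dB, so the step from 14 to 16 bits amounts to roughly 12 dB of theoretical dynamic range.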

There is a story circulating that the playing time on a CD was increased to accommodate Beethoven's Ninth Symphony. Is this true?

Well, Sony believed that if we could accommodate Beethoven's Ninth Symphony, there was enough room to store other long musical works as well. This was another way that we helped to convince Philips to expand the diameter of the CD to 12 centimeters.

Another way we convinced them was to measure the size of a person's coat pocket. We studied a wide variety of pockets and found that most of them measured about 14 centimeters in width, so we concluded that a 12-centimeter CD in a jewel box would not be a problem.

This is a difficult question, but what do you think are the CD's weak points now that the system has evolved? And are there any technical "loose ends" that you had wished had been resolved in another manner?

Taken as a whole, I believe that Compact Disc is a remarkably convenient, high-quality consumer digital audio format, particularly in regards to other current music configurations.

But with the recent development of solid-state memory devices at far lower prices, isn't it possible to design far more powerful error-correction systems for more consistent playback performance?

At one time, the development of such devices was looked upon as a viable option. However, today's higher quality CD replication and laser pickup designs generally ensure more uniform performance, without additional manufacturing or LSI production expenses.

As you pointed out, there are now a number of 20-bit systems in the professional audio market. Some people say that the CD should become an 18-bit format. What is your opinion?

While I technically acknowledge these opinions, at the same time, if CD is to truly remain a viable consumer music format, it should last until at least the end of this century. In other words, just as the 78-rpm record lasted 25 years and the LP lasted 25 years, so I expect the CD to last for 25 years as well. Maybe by the year 2007, we should expect the next generation of music enthusiasts to develop another revolutionary digital audio format.

Speaking of advancements, DAT was big news in this country for a while because of the controversy surrounding its introduction. Was DAT intended to ultimately replace analog cassette, or was it truly meant to be a product for the high-end music enthusiast?

Although this might not be a direct answer to your question, according to my philosophy a recording version of a prevailing technology must be designed to coexist with the technology's prerecorded software. In the case of Compact Disc, which follows a 44.1-kHz, 16-bit format, there must also be a recorder that operates at the same parameters. By the same token, if there is a 48-kHz, 16-bit digital broadcast, there must also be a 48-kHz, 16-bit recording system.

Taking this point of view, it's quite easy to see that DAT was designed to serve a variety of music enthusiast applications.

The Digital Compact Cassette and Mini Disc are now being touted as the ultimate replacement for the analog cassette. Do you think consumers will be confused with these new formats, since their development so closely follows the introduction of DAT?

Both MD and DCC offer suitable digital performance to meet the requirements for portable or mobile music reproduction. Therefore, since these applications do not require full 16-bit linear digital processing, we can make the media both smaller and less expensive.

This difference in intended use is the primary distinction between MD and DCC, versus CD and DAT.

Mini Disc seems to be a very radical departure in signal processing compared to everything that has come before. Given your strong feelings on linear 16-bit encoding, do you feel that data compression is destined only for portable audio or those applications that only require limited dynamic range?

Personally, I believe that these data-compression encoding algorithms will continue to be improved in the future. However, if there is an obvious difference between 20-bit linear and 16-bit linear systems, can you imagine the difference between 16-bit linear encoding and data encoded with only one-fourth to one-fifth the amount of information? Therefore, for the critical listeners, despite the likelihood of expected improvements in data compression schemes in the future, there will always be some audible difference between data-compressed encoding and properly designed linear encoding systems.
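To put the "one-fourth to one-fifth" figure in concrete terms, the sketch below computes the raw audio bit rate of the 44.1-kHz, 16-bit linear format mentioned earlier and then divides it by four and by five. It assumes two-channel stereo and ignores error-correction and subcode overhead. The function name is hypothetical, and the results are plain arithmetic rather than the published bit rates of MD or DCC.

```python
def pcm_bit_rate(sample_rate_hz: int, bits_per_sample: int, channels: int = 2) -> int:
    """Raw linear PCM audio bit rate in bits per second.

    Assumption: two-channel stereo by default; no error-correction,
    modulation, or subcode overhead is included.
    """
    return sample_rate_hz * bits_per_sample * channels


# 44.1 kHz, 16-bit linear, stereo (the CD parameters cited in the interview).
linear_rate = pcm_bit_rate(44_100, 16)
print(f"16-bit linear PCM: {linear_rate / 1000:.1f} kbit/s")

# "One-fourth to one-fifth the amount of information."
for divisor in (4, 5):
    reduced = linear_rate / divisor
    print(f"1/{divisor} of that: {reduced / 1000:.1f} kbit/s")
```

On these assumptions, a data-reduced system of the kind described works with roughly 280 to 350 kbit/s in place of about 1,411 kbit/s.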

So you believe that 16-bit or 20-bit linear systems will continue to be used for high-quality recording?

Yes. I believe that linear digital formats must continue to exist, in order to ensure the highest quality master recordings possible. So at the very least, professional digital recording will continue to improve, based on additional refinements in the linear encoding process.

Digital audio has certainly come a long way in eliminating almost all of the major problems traditionally associated with analog recording and playback. Are there any aspects of digital sound reproduction that you feel still need improvement?

Although digital audio solves many problems inherent in analog signal processing, many people still believe that Compact Disc sound is too analytical or "cold." Therefore, I believe that there is still room to subjectively improve overall digital sound quality in such critical areas as the design of the A/D converter, the Compact Disc player's drive mechanism, and the player's power-supply circuitry.

But in the future, most of my research will center on new discoveries in the field of psychoacoustics, with particular emphasis on the way human beings interact in the listening room environment.

Do you think audio technology will ever improve in its ability to convey the live music experience?

I believe it will remain quite difficult for us to ever achieve this so-called "musical reality." For although we are improving our ability to simulate key sound-field characteristics with more sophisticated digital signal-processing techniques, it must always be remembered that these are only simulations, with far fewer aural cues than the actual live musical experience. While we may be getting closer, we're nowhere close to actually "being" there.

(adapted from: Audio magazine, Jul. 1992)

