by RICHARD S. BURWEN

How does 16-bit digital recording compare with high-quality analog recording for live and studio use? Very well, and noticeably different, I find from my experience with Sony's PCM-F1 digital audio processor and SL-2000 portable videocassette recorder.

For the past three years, I have been making four-channel analog recordings with 110 dB of unweighted dynamic range. These recordings were all made on quarter-inch and half-inch tape at 7 1/2 and 15 ips using the Burwen Model 2000 Companding (3 dB/dB) Audio Processor.

Accustomed to such noise-free analog recordings, I wondered what it would be like to work with a digital system, considering that it would give me just two channels, and only 93 dB of unweighted dynamic range. (That's the 16-bit figure; 14-bit operation didn't interest me.) I acquired two of the Sony systems. Now that I have made over 75 digital cassettes with this outfit, my experiences may be helpful to others who are just starting out in digital recording.
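For a rough sense of where the 93 dB figure sits, the textbook limit for an ideal N-bit converter can be computed directly: it compares a full-scale sine wave with rounding noise spread uniformly over plus or minus half an LSB. This is a minimal sketch of that idealized formula (about 6.02N + 1.76 dB), not a model of the PCM-F1's converters. Real hardware, and any dither noise deliberately added to the signal, will land below it.

```python
import math

def ideal_sine_snr_db(bits: int) -> float:
    """Full-scale sine vs. ideal uniform quantization noise, in dB."""
    amplitude = 2 ** (bits - 1)        # full-scale peak, in LSBs
    signal_power = amplitude ** 2 / 2  # mean square of a sine wave
    noise_power = 1 / 12               # rounding error uniform over +/-0.5 LSB
    return 10 * math.log10(signal_power / noise_power)

for bits in (14, 16):
    print(f"{bits} bits: {ideal_sine_snr_db(bits):.1f} dB")
```

Each extra bit is worth about 6 dB, so the 14-bit mode gives up roughly 12 dB against 16-bit operation. The measured 16-bit figures quoted later (93.3 to 94 dB unweighted) fall a few dB short of the ideal 98 dB.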

Because the VCR passes its input signal through to its output jacks during recording, the PCM-F1 lets you monitor the action of its digital circuitry, which first encodes the signal digitally and then decodes it back to audio. Except for any uncorrected errors that might occur during playback, the output signal during recording is exactly the same as the playback signal. When I ran a variety of my "noise-free" analog tapes through this process, the only difference I heard between the input signal and the system output was additional noise, 93 dB down.

The monitoring system caused a problem in live recording, though.

Stopping the machine after playback in the studio, I nearly blew myself sky-high with feedback into the microphones from my 20,000-watt sound system (Audio, April 1976). I had not realized that the PCM-F1 delivers its monitor signal even when it's not recording or playing. This is convenient when you are wearing headphones while recording, but with speakers in the same room as the microphones, watch out!

Richard S. Burwen is President of Burwen Technology, Lexington, Mass., and inventor of the Dynamic Noise Reduction (DNR) system made in IC form by National Semiconductor. His pop and click reducer, the Model TNE 7000 transient noise eliminator, is one of the best of its kind.

At first, fractional-second losses of signal occurred on both my digital systems. Cleaning the heads did not completely solve the problem. From Bert Whyte and from my own experiments, I learned how to avoid dropouts: use only high-grade videocassettes, up to L-750; avoid the unrecommended Beta-III slow speed; use a head-cleaning cassette, such as Sony's L-25CL, for 40 seconds after every two hours of operation and for two minutes after every 50 hours; and adjust the VCR's playback tracking control for each cassette, using the tracking level meter on the PCM-F1. Since I began following these rules, the dropout problem has virtually disappeared. Nevertheless, for important live recordings I always use two VCRs, as insurance against a damaged or defective cassette.

The only tape to suffer ticks or static from brief, uncorrected dropout errors was my first. On that, I had used the VCR's pause and slow-motion controls, which make the heads scan one section of the tape repetitively, causing extra tape wear there.

Having heard about loss of ambience, harmonic distortion at low signal levels, and ringing caused by anti-aliasing filters, I listened carefully to the effect of the PCM-F1 system on room ambience and on piano notes dying out.

The only difference I could hear between the input and output was a little noise, slightly more erratic than FM hiss.

I listened to voices and a drum set, picked up by a microphone remote from my monitoring room, both directly and through the PCM-F1, on headphones and on speakers. I recorded first at normal level, then 35 dB down, with matching extra gain in playback.

Again, I heard no difference between the microphone and monitor signals except added noise.

Sine waves, recorded 70 dB down and played back with matching gain, seemed to have picked up only noise, not distortion. But in the 14-bit mode, as the signal approached the noise level, I could plainly hear the noise changing in steps and, at one particular level, quite a bit of distortion. Apparently, in the 16-bit mode, the white noise "dither" signal, added to mask bit-level distortion by randomizing it, works quite well. This also contributes to the system's accurate handling of ambience.
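The dither effect described above can be reproduced in a few lines. The sketch below works in units of one LSB, so it applies equally to 14- or 16-bit operation: it quantizes a 1.3-LSB sine wave with and without added noise, then measures the fundamental and the third harmonic. The triangular-PDF dither used here is an assumption, a common textbook choice; the article says only that the PCM-F1 adds a white-noise dither, and its actual level and distribution are not given. The 1.3-LSB amplitude is likewise chosen only for illustration.

```python
import math
import random

def quantize(x: float) -> float:
    """Mid-tread quantizer: round to the nearest whole LSB (values in LSBs)."""
    return float(round(x))

def tpdf_dither(rng: random.Random) -> float:
    """Assumed triangular-PDF dither: sum of two uniform(-0.5, 0.5) LSB values."""
    return rng.uniform(-0.5, 0.5) + rng.uniform(-0.5, 0.5)

def harmonic_amplitude(samples, period, harmonic):
    """Amplitude of the sine component at `harmonic` times the test frequency."""
    acc = sum(v * math.sin(2 * math.pi * harmonic * i / period)
              for i, v in enumerate(samples))
    return 2 * acc / len(samples)

def analyze(amplitude=1.3, period=100, n=48_000, dither=False, seed=1):
    """Quantize a low-level sine; return (fundamental, third harmonic) in LSBs."""
    rng = random.Random(seed)
    out = []
    for i in range(n):
        x = amplitude * math.sin(2 * math.pi * i / period)
        if dither:
            x += tpdf_dither(rng)  # noise added before rounding
        out.append(quantize(x))
    return harmonic_amplitude(out, period, 1), harmonic_amplitude(out, period, 3)

plain_h1, plain_h3 = analyze(dither=False)
dith_h1, dith_h3 = analyze(dither=True)
```

Without dither, the rounded waveform is a three-level staircase carrying a third harmonic of roughly 0.14 LSB, distortion locked to the signal. With dither, the third harmonic averages out to essentially zero and the fundamental comes back close to its true 1.3 LSB; the price is a steady noise floor in place of the distortion.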

Making simultaneous analog and digital live recordings, I was at first distressed to find that the digital recordings, although very clear, had somehow lost the excitement of the analog tapes. The problem wasn't in the digital recorder (which accurately reproduced what the microphones fed in), but in the analog system's imperfections which, to me, enhanced the music. Although my analog machines have been modified for very flat response, small variations in frequency response remain, affecting both tone quality and dynamics. Bass drum energy at 30 Hz is amplified about 1 dB more than middle high frequencies.

With the 3:1 playback expansion, each beat is emphasized, giving an apparent increase in clarity.
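The 3 dB/dB figure describes a 3:1 law: on record, every 3 dB of program level change becomes 1 dB on tape, and playback expansion multiplies it back by three. A minimal sketch, assuming a simple static law with levels in dB relative to a hypothetical 0 dB reference and ignoring the real processor's attack and release behavior, shows both why 110 dB of program fits on tape and why a small tape response error grows on playback.

```python
RATIO = 3.0  # 3 dB/dB companding

def compress_db(level_db: float, ratio: float = RATIO) -> float:
    """Record side: program level (dB re an assumed 0 dB reference) -> tape level."""
    return level_db / ratio

def expand_db(tape_db: float, ratio: float = RATIO) -> float:
    """Playback side: tape level -> reproduced program level."""
    return tape_db * ratio

# 110 dB of program range occupies only about 36.7 dB of tape.
tape_range = compress_db(0.0) - compress_db(-110.0)

# A 1 dB level error introduced by the tape itself...
tape_level = compress_db(-60.0)
error_out = expand_db(tape_level + 1.0) - expand_db(tape_level)  # ...comes out as 3 dB
```

In this idealized model, any level error arising between compressor and expander is tripled in playback, which is consistent with the observation that small analog response variations affect dynamics as well as tone.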

I can simulate this effect by boosting the bass of the digital signal slightly, and passing it through a special volume expander actuated mainly by low frequencies. I can also simulate the analog signal's apparent extra sweetness, by tilting the digital frequency response down about 1 dB through the midrange. (It's surprising how small a change in midrange frequency response can noticeably affect harshness and stereo imaging!) Also, without expansion, the digital signal seemed slightly more reverberant than the analog.
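A broad midrange dip of about 1 dB can be sketched digitally with a single peaking-equalizer biquad. The coefficients below follow Robert Bristow-Johnson's widely used Audio EQ Cookbook formulas. The 1 kHz center, Q of 0.5, and 44,056 Hz sample rate are illustrative assumptions, not the author's equalizer settings.

```python
import cmath
import math

def peaking_biquad(fs, f0, gain_db, q):
    """Peaking-EQ biquad (Audio EQ Cookbook form), normalized so a[0] == 1."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    cos_w0 = math.cos(w0)
    b = (1 + alpha * amp, -2 * cos_w0, 1 - alpha * amp)
    a = (1 + alpha / amp, -2 * cos_w0, 1 - alpha / amp)
    return tuple(x / a[0] for x in b), tuple(x / a[0] for x in a)

def response_db(b, a, fs, f):
    """Magnitude response in dB at frequency f, evaluated on the unit circle."""
    z1 = cmath.exp(-2j * math.pi * f / fs)  # z^-1
    num = b[0] + b[1] * z1 + b[2] * z1 ** 2
    den = a[0] + a[1] * z1 + a[2] * z1 ** 2
    return 20 * math.log10(abs(num / den))

FS = 44_056  # assumed sample rate, Hz
b, a = peaking_biquad(FS, 1000.0, -1.0, 0.5)  # hypothetical 1 dB midrange dip
for f in (20, 1000, 20_000):
    print(f"{f} Hz: {response_db(b, a, FS, f):.2f} dB")
```

The response is exactly -1 dB at the center frequency and returns toward 0 dB at the frequency extremes; widening or narrowing the dip is a matter of changing Q.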

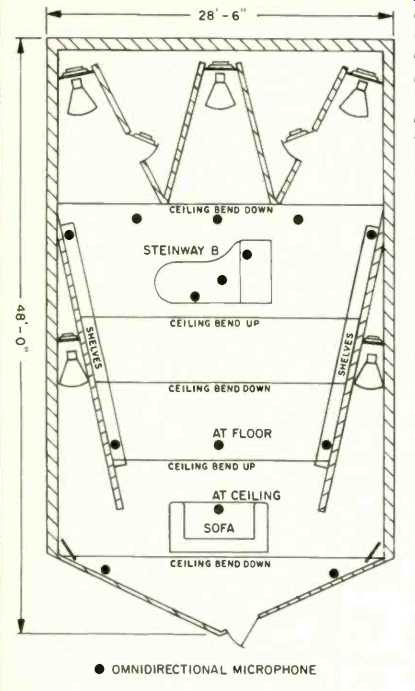

What about the PCM-F1's limitation to two channels? Until recently, I have never been able to find a combination of two front channels plus time delay that equaled my genuine four-channel recording. But after a month of experimenting with piano microphone techniques, equalization, and time delay, I finally arrived at setups for two-channel piano recordings which I find as satisfying as four-channel (Fig. 1).

Fig. 1--Microphone setup for two-channel piano recording in the author's studio. For jazz, pickup is mainly from the three microphones over the piano. For classical recording, most sound is picked up at the junctions of the rear walls and ceiling; each microphone is equalized individually, with 6 to 27 dB of boost at 20 Hz and 6 to 18 dB at 20 kHz.

The 14 omnidirectional microphones at varying distances from the piano simulate wall reflections, making the piano sound larger.

Digital piano recordings can be better than analog ones, because there is no wow and flutter (most audible on the piano's sustained tones) and relatively little noise while the signal is present. When there is little or no signal, my analog system with companding noise reduction has less noise than digital. But at high signal levels, the companding system improves on the tape's own noise by only a few dB. To the average ear, the high-level signal masks the noise.

However, after years of designing noise-reduction systems and listening for their imperfections, I can tell that the noise is still there at high levels, and that digital signals are cleaner.

My measurements of the PCM-F1 basically confirm the maker's claims, including a huge signal-to-noise ratio for a tape machine (in the 16-bit mode, 93.3 to 94 dB unweighted, 95.5 to 95.9 dB with A-weighting and filtering below 20 Hz and above 20 kHz). But I find the S/N marginal for live symphonic recording, and I would welcome another 10 to 15 dB. Because this dynamic range is barely sufficient for my purposes, many recordings must closely approach overload, and an accurate peak-level indicator is essential. Sony's bar-graph indicator is accurate from 0 to -10 dB, but its resolution of 3 dB per step at lower levels is insufficient for my use; I use my own peak-reading analog meters, calibrated with a tone. Sony's "Over" indicator reads the signal after high-frequency pre-emphasis, and so is an accurate clipping indicator. But the bar graph fails to read peaks on some high-frequency signals that clip after pre-emphasis, such as trombone or cymbal, and may read as much as 5 dB too low.
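How far pre-emphasis can lift high-frequency peaks is easy to estimate. The sketch below assumes the 50/15-microsecond emphasis curve used, for example, by the Compact Disc format; the article does not state the PCM-F1's time constants, so treat them as an assumption. The gain it reports is how much higher a component at that frequency stands after pre-emphasis than on a meter reading the un-emphasized signal.

```python
import math

T1 = 50e-6  # assumed emphasis time constants, in seconds
T2 = 15e-6

def preemphasis_gain_db(freq_hz: float) -> float:
    """Gain of the first-order shelf (1 + s*T1) / (1 + s*T2) at freq_hz, in dB."""
    w = 2 * math.pi * freq_hz
    return 20 * math.log10(math.hypot(1.0, w * T1) / math.hypot(1.0, w * T2))

for f in (1_000, 5_000, 10_000, 20_000):
    print(f"{f} Hz: +{preemphasis_gain_db(f):.1f} dB")
```

Under this assumption a 10 kHz component is raised about 7.6 dB, and the boost approaches 20*log10(50/15), about 10.5 dB, at the top of the band. How far a real meter under-reads then depends on how much of a peak's energy sits that high, which is consistent with the author's figure of up to 5 dB on trombone and cymbal.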

Square-wave tests showed both ringing at 22 kHz (to be expected when all harmonics above that frequency are cut off by the anti-aliasing filter) and an 11-ms time delay in passing through the encode/decode and error-correction systems. I tried using the PCM-F1 as a short, wide-band time-delay system, to add some liveness to stereo signals. Results were best when I fed some of the PCM-F1's left output into the right channel, and vice versa, but the effect was small, because of the short delay. Increasing the feedback enough for a reverberant effect caused buzzing, due to repetitive delays at 11- and 22-ms intervals.
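The cross-feed arrangement can be modeled as two equal delay lines, each output fed back at reduced gain into the opposite channel's input. This minimal sketch applies an impulse to the left input and records when it reappears. The 44,056 Hz sampling rate (assumed here to be the PCM-F1's NTSC rate) and the feedback gain of 0.5 are assumptions for illustration; only the 11 ms delay comes from the measurement above.

```python
FS = 44_056  # assumed sampling rate, Hz

def cross_feedback(delay_ms=11.0, gain=0.5, length_ms=60.0, fs=FS):
    """Impulse on the left input; each output is cross-fed into the other input."""
    d = round(fs * delay_ms / 1000)
    n = round(fs * length_ms / 1000)
    in_l, in_r = [0.0] * n, [0.0] * n
    out_l, out_r = [0.0] * n, [0.0] * n
    for t in range(n):
        # each channel's output is its own input, d samples earlier
        if t >= d:
            out_l[t] = in_l[t - d]
            out_r[t] = in_r[t - d]
        # program (an impulse on the left at t = 0) plus scaled cross-feed
        in_l[t] = (1.0 if t == 0 else 0.0) + gain * out_r[t]
        in_r[t] = gain * out_l[t]
    return out_l, out_r, d

def echo_times_ms(out, fs=FS):
    """Times (ms, one decimal) at which the output is nonzero."""
    return [round(1000 * t / fs, 1) for t, v in enumerate(out) if v != 0.0]

out_l, out_r, d = cross_feedback()
print("left:", echo_times_ms(out_l), "right:", echo_times_ms(out_r))
```

The impulse returns at 11, 33, and 55 ms on the left and at 22 and 44 ms on the right: each channel repeats every 22 ms (about 45 Hz) and the pair alternates every 11 ms (about 91 Hz). Raising the gain makes these evenly spaced repeats decay more slowly, so they fuse into a low-pitched periodic tone rather than diffuse reverberation, one way to understand the buzzing described above.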

The PCM-F1 system reverses signal phase 180°. However, in my live studio, where I'm equipped to reverse phase, I have not yet found any musical program material on which I can hear any effect.

I was particularly impressed by the lack of visible distortion on 'scope traces of sine waves near the noise level: confirmation that the dither signal really randomizes bit-level distortion. At 14 bits, the bit steps and distortion were clearly seen, but at 16 bits the waveform showed only noise. The system even preserved a 20-Hz sine wave below the wide-band noise level.
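One way to see how a 20 Hz tone can remain recognizable below the wide-band noise: if the dithered noise is spread evenly (white) across the 20 kHz audio band, only a small fraction of its power falls in any narrow band around the tone. The 100 Hz observation bandwidth and the 10 dB tone level below are illustrative assumptions; a viewer's eye on a 'scope is not literally a filter, so treat the numbers as a rough guide.

```python
import math

def narrowband_noise_drop_db(noise_bw_hz: float, observed_bw_hz: float) -> float:
    """dB by which white noise falls when only observed_bw_hz of its band is kept."""
    return 10 * math.log10(noise_bw_hz / observed_bw_hz)

drop = narrowband_noise_drop_db(20_000, 100)    # about 23 dB
tone_below_wideband_db = 10.0                   # hypothetical tone level
margin_in_band = drop - tone_below_wideband_db  # tone vs. in-band noise, dB
```

On these assumptions, a tone 10 dB below the total wide-band noise would stand about 13 dB above the noise sharing its 100 Hz neighborhood.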

It is in this low-level accuracy and flatness of response near the high-frequency cutoff that I expect measurable and audible differences between equipment.

A nice feature of the Sony PCM-F1 is that you can make perfect copies in video form, using its error-corrected copy output. One of my Burwen Studios demonstration tapes includes a fourth-generation copy made this way, and I cannot hear the slightest difference from the original. Editing, however, is pretty much limited to copying selections from one machine to another, and you have to cope with a 4-second start-up delay, sometimes by using the pause control and risking tape wear.

The main reason digital recording does not sound the same as analog is imperfection in the analog recording system. A digital recording has the potential of sounding better than analog, but only when you have refined your recording techniques and found the optimum equalization: in my case, very slightly closer miking and a bit more bass.

(Source: Audio magazine, Dec. 1983)
