by Gerald Stanley & David McLaughlin [Crown International, Elkhart, Indiana]

THIS ARTICLE has been written to suggest a more helpful way of reporting amplifier distortion specifications to the prospective buyer. The comparative merits of two types of distortion testing will be presented, leading to the conclusion that one test shows some clear advantage over the other.

The widespread appeal of high fidelity has been strengthened by the broad range of equipment currently available. The increasing variety is an undisguised blessing since it allows every enthusiast the freedom to satisfy personal tastes in building his own system. Unfortunately, though, the process of purchasing a system becomes more complicated with this diversity. The expense involved and the individual nature of a high fidelity system usually result in a great deal of evaluation and comparison by the careful customer, who naturally wants good performance for good money. Any performance information that can make the evaluation easier will be very beneficial.

Obviously, the effective communication of technical performance data is not an easy thing. First of all the information has to be presented in terms that the buyer can easily handle. After all, why should it be necessary for someone to have an intimate knowledge of audio electronics in order to make an intelligent purchase? It is also important that standard terms be used by all manufacturers in order to facilitate comparisons between different brands of equipment. Finally, since knowledge and equipment fall short of perfection, a constant updating of test procedures becomes essential. Unfortunately for the customer, the information he needs does not come from all manufacturers in a standard form, and traditional rating methods give way very slowly to more effective practices. This is not to say that current performance specifications are not helpful in making buying decisions, but to point out that they could be more helpful.

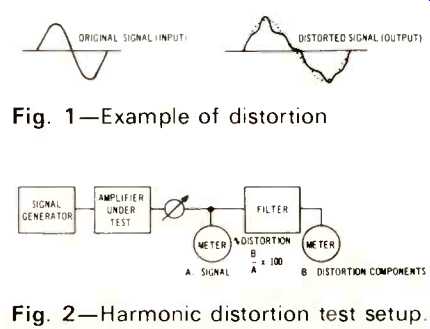

One of the most often quoted and inadequately defined technical specifications in the audio field involves the distortion produced in audio amplifiers. The term "distortion" covers a multitude of audio evils. Basically it describes a change in the original signal introduced by the electronic and mechanical equipment employed in reproducing the signal. An example is drawn in Fig. 1. The goal of any audio reproduction system is to approach to a greater or lesser degree (for a greater or lesser number of dollars) a perfect duplication of an original production of a piece of music. The sound of the original performance, whether it comes from Van Cliburn or Flatt and Scruggs, becomes the standard. Any changes in the original sound can be described as some form of distortion [1]. In the process of translating an original performance onto a disc or tape, a certain amount of distortion is introduced. When the record or tape is played, the high fidelity reproducing system introduces other subtle changes and the resulting sound moves a little further from the original. As you would expect, the degree of change or distortion depends on the overall quality of all equipment that has been used in the process. Interestingly enough, the distortion may not even sound unpleasant, but as long as it represents a difference from the original, it is distortion by definition. To minimize overall distortion, then, it is generally important to have each piece of equipment in a system [2] produce a minimum amount of distortion. This seems rather obvious, but because of the difficulty of comparing distortion specifications which are stated differently, it is not always easy to determine which equipment will produce the least objectionable distortion.

Fig. 1--Example of distortion.

Fig. 2--Harmonic distortion test setup.

Two basic methods have come into use for measuring distortion in audio amplifiers. Unfortunately the results of one method do not necessarily indicate the results of the other. To compound the problem, the methods are used with varying degrees of thoroughness, which give varying amounts of useful information about the performance involved. Simply stated, the truth is that the buyer is not being helped as much as he could be.

To begin with, the problems involved in evaluating amplifiers are rather significant. For instance, distortion tests are not performed while the amplifier is actually handling music. (See "Amplifier Q's and A's--Mainly for Beginners" in this issue.) Of course, the equipment will actually be used to play music, but the situation is too complex to permit a practical distortion test to be performed. Therefore, the actual testing requires some simplification. The test conditions must also be standardized, so they can be duplicated accurately by anyone wishing to check the results.

Supply voltages, signal levels, test equipment capabilities, loads and so forth must be specified wherever they have a significant effect on the outcome of the test. When these variables are defined, more meaningful comparisons can be made between different brands of equipment. The test should also illuminate thoroughly the particular qualities (good and bad) of the equipment being tested. For instance, a test could be made which would show excellent performance at a particular operating point of an amplifier while completely ignoring the performance at other equally important operating levels. To summarize, a test should be a simplified and repeatable version of actual operating conditions, which adequately covers the range of performance expected. Both of the methods commonly used to evaluate audio amplifier distortion are simplified, repeatable versions of actual operating conditions, which can be used to check the whole range of expected performance. One, however, offers particular advantages in the evaluation of modern audio amplifiers.

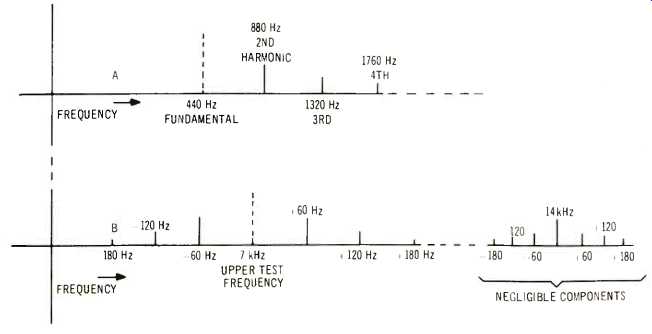

First, let us consider the traditional method: harmonic distortion testing. This involves evaluating an amplifier's performance as it handles a one-frequency signal. The complex musical signal is thus approximated by a single frequency. The test signal must be as free of distortion as possible, so that its inherent distortion is not confused with the distortion introduced by the amplifier. Essentially, the test signal is passed through the amplifier and the resulting output signal is checked for changes from the input. The general arrangement of equipment is shown in Fig. 2. The output signal is measured, after which the original test frequency is filtered out. The remaining output signal is then measured, on the assumption that what is left over consists of unwanted distortion components added by the amplifier. As typically produced (ignoring effects of hum and noise), this distortion is called harmonic distortion because the unwanted additions to the single tone can be separated into the harmonics of that tone. By way of illustration, if 440 Hz is used for the test signal, the second harmonic will be 880 Hz, the third harmonic will be 1320 Hz, and so on. In this case, harmonic distortion measurements should indicate the prominence of these distortion frequencies: 880 Hz, 1320 Hz, etc.
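The single-tone test described above can be imitated numerically. The following is only a rough sketch: the "amplifier" is a made-up polynomial (unity gain plus small square and cube terms, with coefficients `a2` and `a3` chosen arbitrarily), and the sample rate and record length are assumptions picked so that 440 Hz and its harmonics fit a whole number of cycles. It is not a model of any real amplifier or analyzer.

```python
import math

SAMPLE_RATE = 48_000   # samples per second (assumed)
NUM_SAMPLES = 4_800    # 0.1 s, so 440 Hz and its harmonics complete whole cycles
TEST_FREQ = 440.0      # test tone used in the text


def tone_amplitude(samples, freq_hz):
    """Peak amplitude of one frequency component (single-bin DFT)."""
    step = 2.0 * math.pi * freq_hz / SAMPLE_RATE
    real = sum(s * math.cos(step * i) for i, s in enumerate(samples))
    imag = sum(s * math.sin(step * i) for i, s in enumerate(samples))
    return 2.0 * math.hypot(real, imag) / len(samples)


def toy_amplifier(x, a2=0.02, a3=0.01):
    """Hypothetical amplifier: unity gain plus small square and cube terms."""
    return x + a2 * x**2 + a3 * x**3


# A clean 440 Hz test tone of unit amplitude.
test_signal = [math.sin(2.0 * math.pi * TEST_FREQ * i / SAMPLE_RATE)
               for i in range(NUM_SAMPLES)]
output = [toy_amplifier(x) for x in test_signal]

# Measure the fundamental (k = 1) and the next three harmonics.
harmonic_levels = {k: tone_amplitude(output, k * TEST_FREQ) for k in range(1, 5)}
for k, level in harmonic_levels.items():
    print(f"harmonic {k}: {k * TEST_FREQ:6.0f} Hz  amplitude {level:.5f}")
```

In this toy case the square term produces the 880 Hz component and the cube term produces the 1320 Hz component, while nothing appears at 1760 Hz because the polynomial has no fourth-order term.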

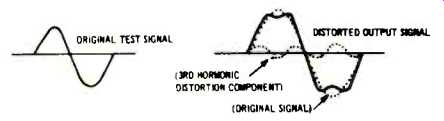

Figure 3 gives an example of the appearance of harmonic distortion on an oscilloscope display. Figure 4a shows where the harmonics appear on a frequency spectrum. Some idea of the relative sounds involved can be gained by sitting down at a piano and sounding middle A (440 Hz). The second harmonic (880 Hz) would be A one octave higher. The third harmonic (1320 Hz) lies close to the E above that A, and so forth. Since these are all in harmony, playing them together will obviously not produce an unpleasant sound, but the sound will definitely be different from the sound of middle A alone. In the actual test, a wave analyzer may be used to look at each of the harmonics individually, to see how much each contributes to the total distortion. In some equipment, the second harmonic may be the largest component, while in other equipment the third or some higher harmonic may contribute the most. Usually a total harmonic distortion (THD) figure is stated, which ideally expresses the rms sum of all the harmonic distortion components together as a percentage of the rms fundamental signal [3]. For the sake of thoroughness, the tests should be repeated at different frequencies and at different power levels, although this takes much more time and effort.
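Written out, the ideal THD figure described above takes the following form. The symbols are introduced here for illustration only: V_1 is the rms level of the fundamental and V_2, V_3, ... are the rms levels of the harmonics.

```latex
\mathrm{THD}\,(\%) = 100 \times \frac{\sqrt{V_2^{2} + V_3^{2} + V_4^{2} + \cdots}}{V_1}
```

As a purely hypothetical example, a 1 V fundamental accompanied by a 10 mV second harmonic and a 2.5 mV third harmonic would give

```latex
\mathrm{THD} = 100 \times \frac{\sqrt{(10\ \mathrm{mV})^{2} + (2.5\ \mathrm{mV})^{2}}}{1000\ \mathrm{mV}}
             = 100 \times \frac{\sqrt{106.25}\ \mathrm{mV}}{1000\ \mathrm{mV}}
             \approx 1.03\,\%
```

The single figure does not reveal that, in this example, nearly all of the distortion comes from the second harmonic.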

Fig. 3--Example of harmonic distortion.

Fig. 4--A, harmonic distortion components; B, intermodulation distortion components.

Fig. 5--Example of IM distortion.

Fig. 6--IM distortion test setup.

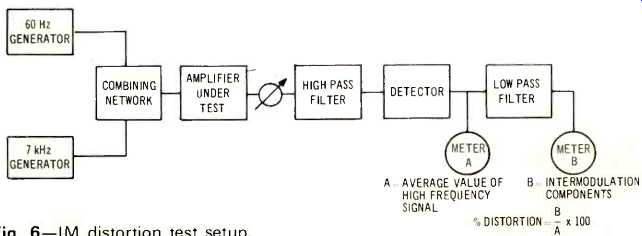

The second and acoustically more relevant method of distortion testing measures intermodulation (IM) distortion. This type of test evaluates amplifier performance as it handles a two-frequency signal. The complexity of a musical signal is again simplified, this time being approximated by the interactions of two frequencies. As defined by the Society of Motion Picture and Television Engineers (SMPTE), the IM distortion test signal is made up of two frequencies in a 4:1 amplitude ratio of low frequency to high frequency. Typically the two frequencies are 60 Hz and 7 kHz (Fig. 5). The general test arrangement is shown in Fig. 6. The output from the amplifier passes through a filter which removes the low frequency (60 Hz) test signal. The remaining output, consisting of the high frequency test signal (7 kHz) plus the distortion modulation components, is AM detected [4], after which everything but the distortion products is removed by a second filter. The distortion modulation components are measured and expressed as a percentage of the total AM detected signal. The primary distortion measured comes from the interaction of the two test frequencies. The 60 Hz frequency will modulate the 7 kHz frequency and form sum-and-difference frequencies, such as the sum of the two (7060 Hz) and the difference between the two (6940 Hz). Other sum-and-difference frequencies will also appear involving the harmonics of both frequencies. Figure 4b shows what some of these will be on the frequency spectrum. For the purpose of practical measurement, only the distortion components around 7 kHz are significantly large and these are the ones measured as distortion. Figure 5 shows how IM distortion of the test signals might appear on an oscilloscope display. Again using the piano to illustrate, an idea of the kind of sound involved here can be gained by sounding middle A again, and then sounding middle A along with the white keys on either side of it (G and B). These two notes are roughly 50 Hz away from A (about 48 Hz below and 54 Hz above), and when played together with A, demonstrate the kind of dissonance resulting from intermodulation distortion.
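The two-tone signal and the sum-and-difference frequencies described above can be sketched in a few lines. The function names, the default sample handling, and the choice to scale the signal so that the two amplitudes add up to a given peak are all assumptions made for illustration. Only the 60 Hz and 7 kHz frequencies and the 4:1 ratio come from the text.

```python
import math


def smpte_test_signal(num_samples, sample_rate, f_low=60.0, f_high=7000.0,
                      ratio=4.0, peak=1.0):
    """Two-tone signal with a low:high amplitude ratio of `ratio`:1.

    The amplitudes are scaled so that their sum equals `peak`
    (an illustrative convention, not taken from the article).
    """
    a_high = peak / (ratio + 1.0)
    a_low = ratio * a_high
    return [a_low * math.sin(2.0 * math.pi * f_low * i / sample_rate)
            + a_high * math.sin(2.0 * math.pi * f_high * i / sample_rate)
            for i in range(num_samples)]


def im_sidebands(f_low, f_high, max_order):
    """Sum-and-difference frequencies f_high +/- k * f_low for k = 1..max_order."""
    freqs = []
    for k in range(1, max_order + 1):
        freqs.append(f_high - k * f_low)
        freqs.append(f_high + k * f_low)
    return sorted(freqs)


signal = smpte_test_signal(num_samples=4_800, sample_rate=48_000)
print(im_sidebands(60, 7000, 2))  # [6880, 6940, 7060, 7120]
```

The first-order pair (6940 Hz and 7060 Hz) matches the figures quoted in the text. The second-order pair involves the second harmonic of the 60 Hz tone.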

Depending upon the particular conditions of the test, such as the characteristics of the equipment being tested, the frequencies used may be changed and the 4:1 amplitude ratio between frequencies may vary. Generally the 4:1 ratio of 60 Hz and 7 kHz is used because it provides a realistic example of the musical situations for which an audio amplifier is designed. IM tests should be run at a wide range of output power levels to reveal problems that may show up only at particular levels.

As an example which will be discussed in more detail later on, IM testing shows excellent sensitivity to low power crossover notch distortion, which is a traditional sore spot of some solid state amplifier designs.

Now that we have briefly discussed both methods, you might naturally ask how they are related, but this is neither a simple nor a brief proposition. A great deal of discussion has been published [5] with impressive mathematical support to describe this elusive relationship, but the results do not apply to most equipment. Several common (and sometimes desirable) characteristics of electronic equipment can, singly or together, remove any predictable relationship between IM and harmonic distortion. At a given peak power level, and within the normal operating range of high fidelity amplifiers, IM distortion typically runs from two to six times as high as harmonic distortion. In any individual case, however, it is necessary to run both tests if both harmonic and IM figures are needed. This lack of a simple means by which to compare IM and harmonic ratings suggests that the customer would prosper if one method were consistently used, in which case he could make meaningful comparisons.

For a number of reasons, IM testing is the logical choice.

To begin, there are significant weaknesses in the harmonic testing procedure. First, the harmonics detected as distortion are not always offensive to the listener. The piano experiment suggested above should illustrate this, along with the fact that musical sounds are frequently made up of harmonic combinations. Second, the single-frequency test signal does not resemble typical program material and the results do not indicate the kind of complex interactions that occur between different frequencies. This can result in ignorance of serious deficiencies in the equipment.

Third, the usual THD figure groups all harmonic components together, which can mask the fact that most of the potentially offensive distortion comes from high order (higher frequency) harmonics [6]. An amplifier generating mostly high order distortion products may then sound worse than another with the same THD rating which produces lower order distortion. Fourth, THD measurements group noise along with harmonic components and thus may produce a mischaracterization of a product. Fifth, harmonic distortion testing instruments may have residual distortion levels above the distortion levels of the amplifiers under test. It is difficult to inexpensively produce and analyze a test signal with distortion lower than that of state-of-the-art audio amplifiers. Sixth, the test procedure is unwieldy. In the usual process, some fine tuning is involved to completely remove the test signal before the harmonics are measured, a procedure which needs to be repeated at different frequencies, and then at different power levels for each frequency. This results in a sensitive operation being performed many times for a single piece of equipment.

Many of the aforementioned weaknesses could be lessened by the use of a wave analyzer, but this would not help the problems of expense and time involved.

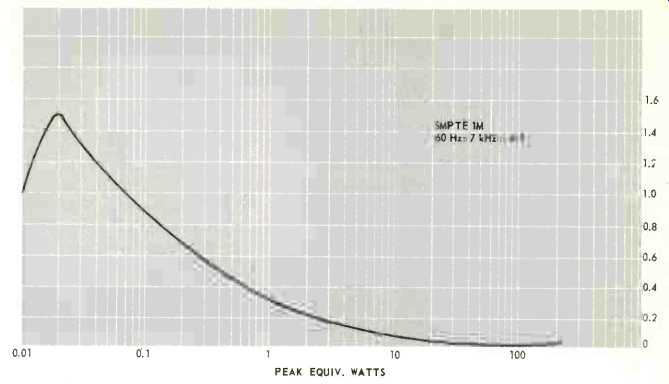

Fig. 7--IM evidence of crossover notch at low power.

In contrast, IM testing offers clear advantages over harmonic testing. First, the sum-and-difference frequencies detected as distortion by IM testing are not harmonically related to the original signals and therefore constitute a much more audibly obnoxious type of distortion (as suggested by the piano experiment). Second, the use of a two-frequency test signal provides a simple but more realistic approximation of musical material, and the test results indicate the interactions between frequencies that can be expected in actual use. Third, the use of the 4:1 SMPTE amplitude ratio gives an inherent prominence to more audible high-order distortion products, which in turn brings about better agreement of IM test results with listening tests [7]. Fourth, SMPTE IM measurements concentrate on a relatively narrow band of frequencies around the upper test frequency, a situation which serves to keep hum and other noise out of the final test results.

Fifth, it is possible to obtain reasonably priced IM distortion measuring equipment with residual distortion levels below those of state-of-the-art audio amplifiers. Sixth, since there is no tuning needed to filter out the test frequencies and since two frequencies in combination provide a test for the entire audio bandwidth, the only change necessary during the test is in the power level.

With proper equipment, IM testing can thus be done very quickly and efficiently.

Despite these advantages, IM distortion testing has found limited use and has sometimes been used to poor advantage. It is most important to cover an adequate range of power levels if an amplifier is to be thoroughly tested.

As mentioned before, crossover notch distortion has plagued many solid state amplifiers. Fortunately, this type of distortion generates high order terms which quickly show up in SMPTE IM measurements if the tests are made at the levels (as low as 10 milliwatts) where crossover problems occur. Testing down to a level of 1 watt (a commonly used lower test limit, and frequently what is meant by the expression "all power levels below rated output") hardly ever reveals the crossover notch distortion. (In a 100 watt amplifier, for example, 1 watt is only 20 dB below full output, whereas music may cover 70 dB.) Figure 7 illustrates the kind of IM increase that can occur at low power levels.
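The rise of IM distortion at low power can be illustrated with a toy simulation. Everything here is an assumption made for the sketch: the crossover notch is modeled as a simple dead zone of arbitrary width (`DEAD_ZONE`), full output is taken as a peak of 1.0, and the IM figure is estimated from the spectrum as the rms sum of the sidebands around 7 kHz divided by the 7 kHz component. That spectral estimate stands in for the filter and AM detector chain of a real analyzer and will not reproduce its readings exactly.

```python
import math

SAMPLE_RATE = 48_000
NUM_SAMPLES = 4_800        # 0.1 s: 60 Hz and 7 kHz both complete whole cycles
F_LOW, F_HIGH = 60.0, 7000.0
DEAD_ZONE = 0.002          # hypothetical notch width, relative to full output = 1.0


def tone_amplitude(samples, freq_hz):
    """Peak amplitude of one frequency component (single-bin DFT)."""
    step = 2.0 * math.pi * freq_hz / SAMPLE_RATE
    real = sum(s * math.cos(step * i) for i, s in enumerate(samples))
    imag = sum(s * math.sin(step * i) for i, s in enumerate(samples))
    return 2.0 * math.hypot(real, imag) / len(samples)


def smpte_signal(peak):
    """60 Hz and 7 kHz tones in a 4:1 amplitude ratio, summing to `peak`."""
    a_high = peak / 5.0
    a_low = 4.0 * a_high
    return [a_low * math.sin(2.0 * math.pi * F_LOW * i / SAMPLE_RATE)
            + a_high * math.sin(2.0 * math.pi * F_HIGH * i / SAMPLE_RATE)
            for i in range(NUM_SAMPLES)]


def crossover_stage(x, dead_zone):
    """Toy output stage: anything smaller than the dead zone is lost."""
    if x > dead_zone:
        return x - dead_zone
    if x < -dead_zone:
        return x + dead_zone
    return 0.0


def im_percent(peak, dead_zone=DEAD_ZONE, max_order=4):
    """Rms sum of the sidebands at 7 kHz +/- k * 60 Hz, as a percentage of the 7 kHz tone."""
    out = [crossover_stage(x, dead_zone) for x in smpte_signal(peak)]
    carrier = tone_amplitude(out, F_HIGH)
    sideband_power = sum(
        tone_amplitude(out, F_HIGH + sign * k * F_LOW) ** 2
        for k in range(1, max_order + 1) for sign in (-1, 1))
    return 100.0 * math.sqrt(sideband_power) / carrier


results = {}
for peak in (1.0, 0.1, 0.01):
    db_down = 20.0 * math.log10(1.0 / peak)   # level below full output
    results[peak] = im_percent(peak)
    print(f"{db_down:4.0f} dB below full output: IM about {results[peak]:.2f} %")
```

For a 100 watt amplifier, the three levels correspond to full output, 1 watt, and 10 milliwatts. In this toy model the IM figure grows as the level drops, because the fixed dead zone becomes a larger fraction of the signal. That is the qualitative behavior the text attributes to crossover notch distortion.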

To summarize, harmonic distortion testing is, on the surface, very simple conceptually and can be useful in equipment for which SMPTE IM testing would be inadequate (such as a graphic equalizer, where low and high frequencies follow different signal paths). But for many situations, and in particular the case of audio amplifier testing, IM distortion measurements offer distinct advantages both to the manufacturer and to the consumer. Because such tests are simple with a modern, inexpensive IM analyzer, serious customers should insist on a fully documented plot of IM distortion versus output power, a request which quality manufacturers will happily fulfill.

1. A separate problem is noise, which involves the addition of unwanted sounds not related to the sound being reproduced, such as hum from power supplies, etc. Important kinds of distortion included in the definition above, but not considered in this discussion, are phase distortion and amplitude distortion. Phase distortion deals with the shifting of the complex relationships among the different tones of a musical signal and is generally much more subtle than harmonic or intermodulation distortion. Amplitude distortion results from variation of gain with frequency and shows up as poor frequency response.

2. This assumes that the equipment has been chosen so that the different components will be compatible with each other, or noninteractive. (For example, damping factor is a measure of non-interactiveness of amplifiers and loudspeakers.) Otherwise, some part of the system will be improperly loaded or driven and distortion will occur regardless of the quality of the equipment.

3. Commercial THD analyzers actually measure the average of the distortion signals taken as a percentage of the average distorted amplifier output, rather than measuring rms figures.

4. This operation in effect demodulates the high frequency signal from the high pass filter. From the demodulated signal an average value is taken as a reference for the percent distortion. The intermodulation components (low frequencies) are then separated from the high frequency by the low pass filter.

5. See, for example, Callender, M.V. and S. Matthews "Relations between Amplitudes of Harmonics and Intermodulation Frequencies," Electronic Engineering (June, 1951), P. 230, and D.E. O'N. Waddington "Intermodulation Distortion Measurements," Journal of Audio Engineering Society (July, 1964), Vol. 12, #3, P. 221.

6. Shorter, D.E.L. "The Influence of High Order Products in Non-linear Distortion," Electronic Engineering (April, 1950), P. 152.

7. Callender and Matthews, op. cit.

8. See also "Amplifier Requirements & Specifications," AUDIO, (April, 1971), Vol. 54, #4, p. 32.

(Audio magazine, Feb. 1972)

